Part 1: Introduction

AI is useful for charities now. Not in theory, not with caveats, not in 2027. Practical applications work and most organisations can start getting value out of them today. That's a shift from when we wrote the first edition of this playbook a year ago, when a lot of charity leaders hadn't tried AI themselves yet. Most now have, and the conversation has moved on from "should we?" to "where does this actually help?"

In the past year we've worked with charities doing things with AI that nobody would have expected two years ago. We've also spent time with organisations still trying to figure out where to start. Both of those experiences shaped this book. If you're already well into your AI journey, there's plenty here on the harder organisational questions. If you haven't started yet, it's never too late, and the starting points are easier than they were a year ago.

Here's what we've learned. The organisations getting the most from AI don't start with the technology. They start with something they already understand well: feedback they can't get through fast enough, donors they're losing without knowing why, case notes that sit unread because nobody has time. AI turns out to be good at exactly that kind of work. But it only gets you somewhere useful if someone who understands the problem is driving it.

The harder bit is organisational. It always is. The technology mostly works. But changing how people work, getting data into shape, that's the real job, and it's not a new problem.

This technology is moving fast enough that nobody can predict the detail of where it goes. But that's not a reason to wait. The organisations getting the most from AI started with small experiments and built from there. You can do that at any point, and the starting points are better now than they were a year ago.

This edition is structured around how charities actually work. Parts 1 to 3 cover the context and the organisational questions that apply regardless of what you're using AI for. Then you'll find practical guidance by function: fundraising, service delivery, operations, impact measurement, communications, and data. Each section includes recipes for specific problems, available as step-by-step guides at AI Recipes for Charities. Start with a problem you have, find the matching section, and see what's involved.

Pick a problem. Try a recipe. See what you learn.

1. What's changed since the first edition?

The most significant change is direct experience. When we wrote the first edition, many charity leaders either hadn't used generative AI themselves, or were using it infrequently. Now almost everyone has tried it, formed their own impressions, and is asking harder questions about where it adds value.

The technology has moved fast, fast enough that changes which would normally take years have happened in months. A year ago, AI was good at generating text when asked. Now it can sustain complex, multi-step work: building applications, analysing datasets, managing workflows across multiple systems. Things that required a developer six months ago can now be done by someone who understands the problem.

Last year we wrote that "AI is also now multimodal - you can upload images, documents, spreadsheets and audio files for analysis", which seems a bit quaint now. The tools have stretched so far beyond "it can also look at pictures now" that what felt on the edge of things last year already feels routine.

AI has become part of the standard tools charities use: Microsoft 365 Copilot, Salesforce Einstein, features within Google Workspace. Many organisations are paying for AI without consciously adopting it. Whether that's delivering value is another question.

AI now takes actions, not just generates content. These "agentic" systems browse the web, send emails, update databases, and complete multi-step tasks. They're in the tools charities already use, and people are adopting standalone agents independently, often before their organisation has a policy for them. We cover agentic AI in more detail later.

The sector context has shifted too. Financial pressures have intensified: redundancies, funding cuts, increased demand. AI could help stretched teams do more, but implementing it requires investment that feels impossible right now. We've tried to address this with practical advice and implementation guides.

2. The pace is not slowing down

The capabilities available now are well beyond what existed when we wrote the first edition of this playbook, and that was only a year ago.

The recent leaps have been particularly striking. Tools like Claude Code can now build functional applications, create internal tools, and automate workflows without deep technical expertise. AI can write, test, and debug code across entire projects, not just suggest snippets. To give one example: Hearing Dogs for Deaf People used AI-assisted prototyping to test three new revenue concepts in a three-week sprint, producing financial projections, mock product photography, and functional websites. None turned out to be viable - but finding that out quickly and cheaply, before committing real investment, was the point.

This isn't just about coding. The models themselves have become substantially more capable at reasoning, at handling complex tasks, at working with large amounts of information. Features that felt experimental a few months ago are now reliable enough to build on.

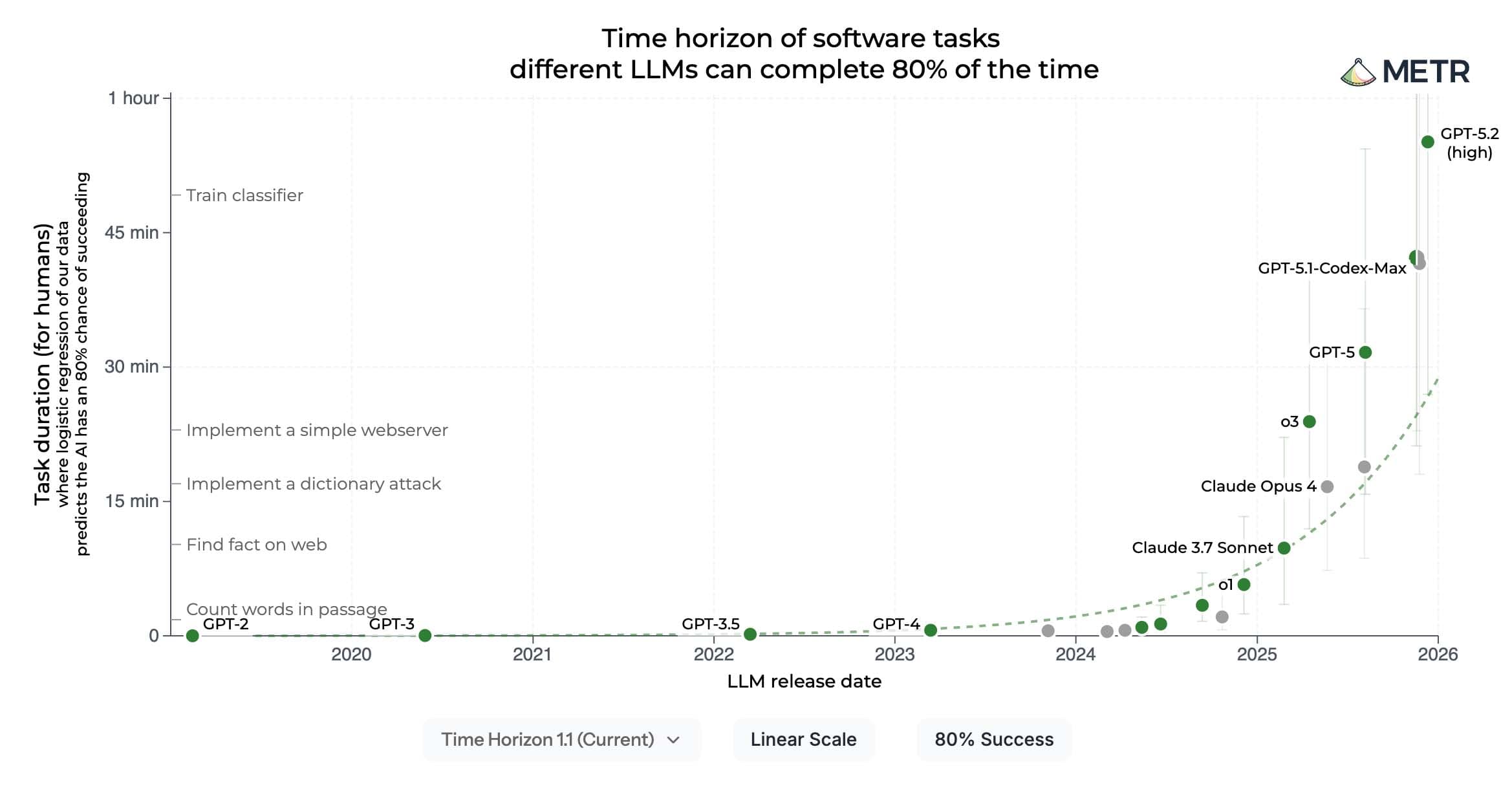

One way to see how much has changed: the research organisation METR tracks how long AI can work independently on real tasks that require judgment and problem-solving. In mid-2024, the best models could reliably handle tasks of about three to five minutes. By early 2025, that had tripled to around fifteen minutes. By late 2025, it had reached roughly half an hour - all at the stricter 80% reliability benchmark METR tracks. The broader trajectory is exponential, with capability at a 50% reliability benchmark doubling roughly every seven months (METR).
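To make the doubling arithmetic concrete, here is a minimal Python sketch. It assumes smooth exponential growth, which is a simplification. The function names are ours, and the month count used for the gap between "mid-2024" and "late 2025" is a rough assumption rather than a METR figure. Note too that the minute figures above are at the 80% benchmark while the seven-month doubling is at the 50% benchmark, so the comparison is only indicative.

```python
import math


def implied_doubling_months(start_minutes: float, end_minutes: float, months_elapsed: float) -> float:
    """Doubling time implied by growth from start to end over a period."""
    doublings = math.log2(end_minutes / start_minutes)
    return months_elapsed / doublings


def project_minutes(start_minutes: float, months_ahead: float, doubling_months: float = 7.0) -> float:
    """Task length after months_ahead, if it doubles every doubling_months."""
    return start_minutes * 2 ** (months_ahead / doubling_months)


if __name__ == "__main__":
    # Assumption: roughly 4 minutes in mid-2024 and 30 minutes about 17 months later.
    print(round(implied_doubling_months(4, 30, 17), 1))
    # Illustration only: a 30-minute horizon after 14 more months,
    # if a seven-month doubling were to hold.
    print(project_minutes(30, 14))
```

Under those assumptions the implied doubling time comes out a little under seven months, which is in the same range as the published trend. Treat the projection as a planning aid, not a forecast.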

For charities, this creates a genuine challenge. How do you plan when things shift this quickly? How do you make investment decisions when the tools might be different in six months? There's no easy answer, but the organisations navigating this well tend to share some characteristics: they have someone watching the horizon, they build in regular review points, and they hold their goals firmly while staying flexible about how they get there.

The risk isn't just moving too fast. It's also moving too slow - waiting for things to settle when they might not settle for years, and falling further behind organisations that are learning by doing.

3. The enthusiasm isn't universal

Not everyone is convinced. A quarter of UK workers fear losing their jobs to AI in the next five years (Acas). Forty percent of knowledge workers say they'd be fine never using it again (Section, January 2026). The anxiety isn't unfounded: over 50,000 job cuts in 2025 were directly attributed to AI, and the tech sector has pivoted to AI adoption faster than any other (Challenger, Gray & Christmas). The roles most affected are those involving routine information processing.

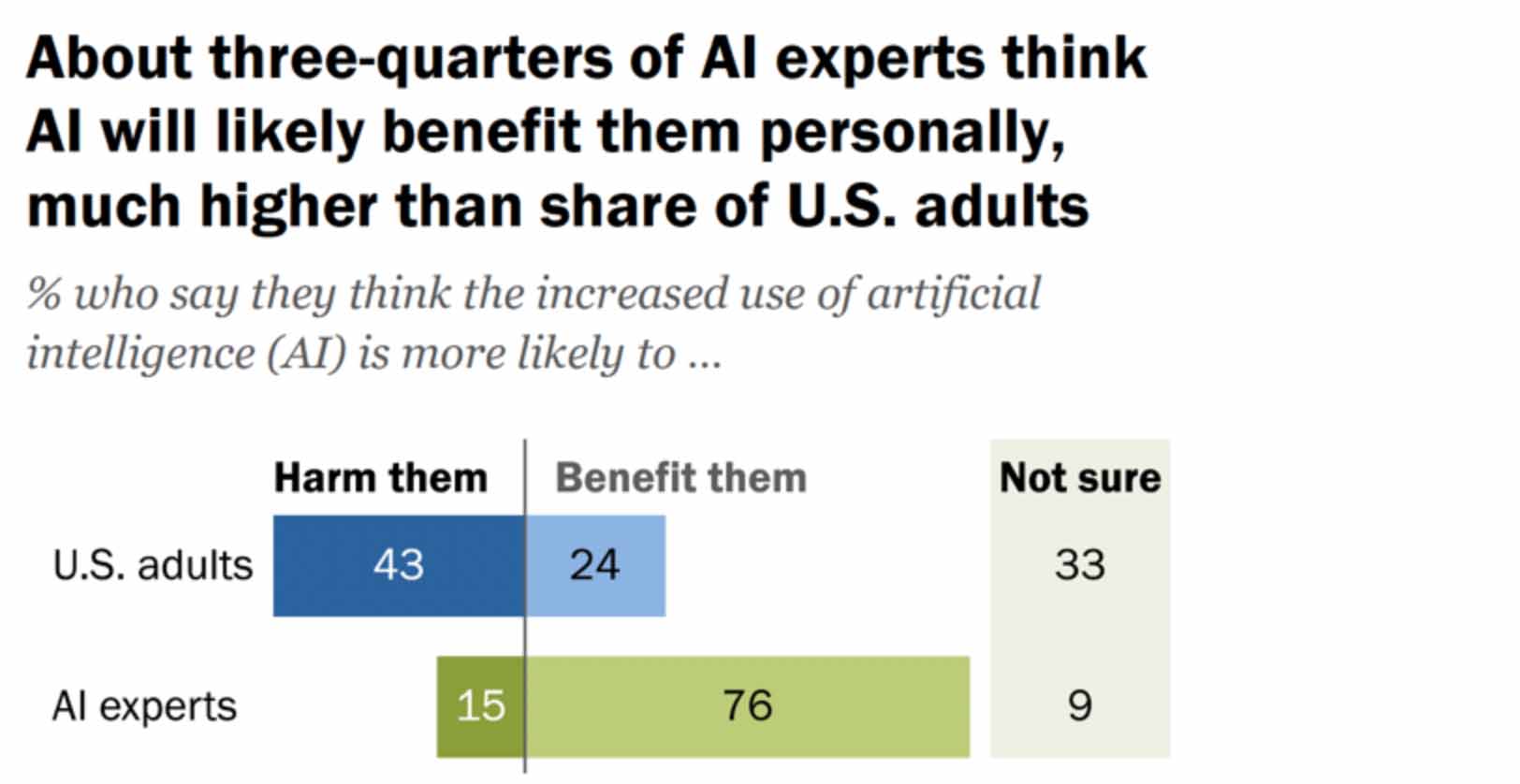

The gap between how experts and the public see AI is pretty lopsided. Seventy-six percent of AI experts believe the technology will benefit people, but only 24% of the general public agree. Forty-three percent of the public think AI is more likely to harm them than help them (Pew Research, 2025). This matters for charities because your beneficiaries and supporters are part of that public, not the expert group.

The gap between leadership and staff is striking. Forty percent of C-suite executives report saving eight or more hours a week with AI. Two-thirds of non-management workers say they're saving less than two hours, or nothing at all (Section, January 2026). People are being asked to adopt tools that might make their roles redundant, while also being told those same tools will make their work better. That's a difficult message to reconcile.

There's also some scepticism about whether AI is delivering on its promises. Microsoft has cut Copilot sales targets. Gartner predicted in 2024 that 30% of generative AI projects would be abandoned after proof-of-concept by the end of 2025 (Gartner, July 2024). Talk of an AI bubble is now constant.

Much of the big investment is chasing artificial general intelligence: systems that could match human capabilities across all domains. That may or may not arrive. But the practical applications in this playbook, using AI to analyse feedback or forecast demand, are more modest. They work now, and they don't depend on the next breakthrough.

4. Infrastructure dependency and the environmental impact

The dominant AI tools are American: OpenAI, Anthropic, Google, Microsoft. Most charities already depend heavily on US-controlled infrastructure for email, cloud storage, CRM, and collaboration tools. AI adds another layer to an existing dependency.

With an unpredictable US administration, this carries risks that would have seemed abstract a few years ago. What happens if policy changes affect access, pricing, or data handling? There's no easy answer, but this is probably a conversation for board level: understanding where your organisation is building dependency on infrastructure you don't control, and what that means for resilience.

The environmental impact of AI is real, and it's growing. Charities with sustainability commitments, and those working on environmental issues, need to understand what's happening, even if individual organisational use is a small part of the picture.

The scale of the problem

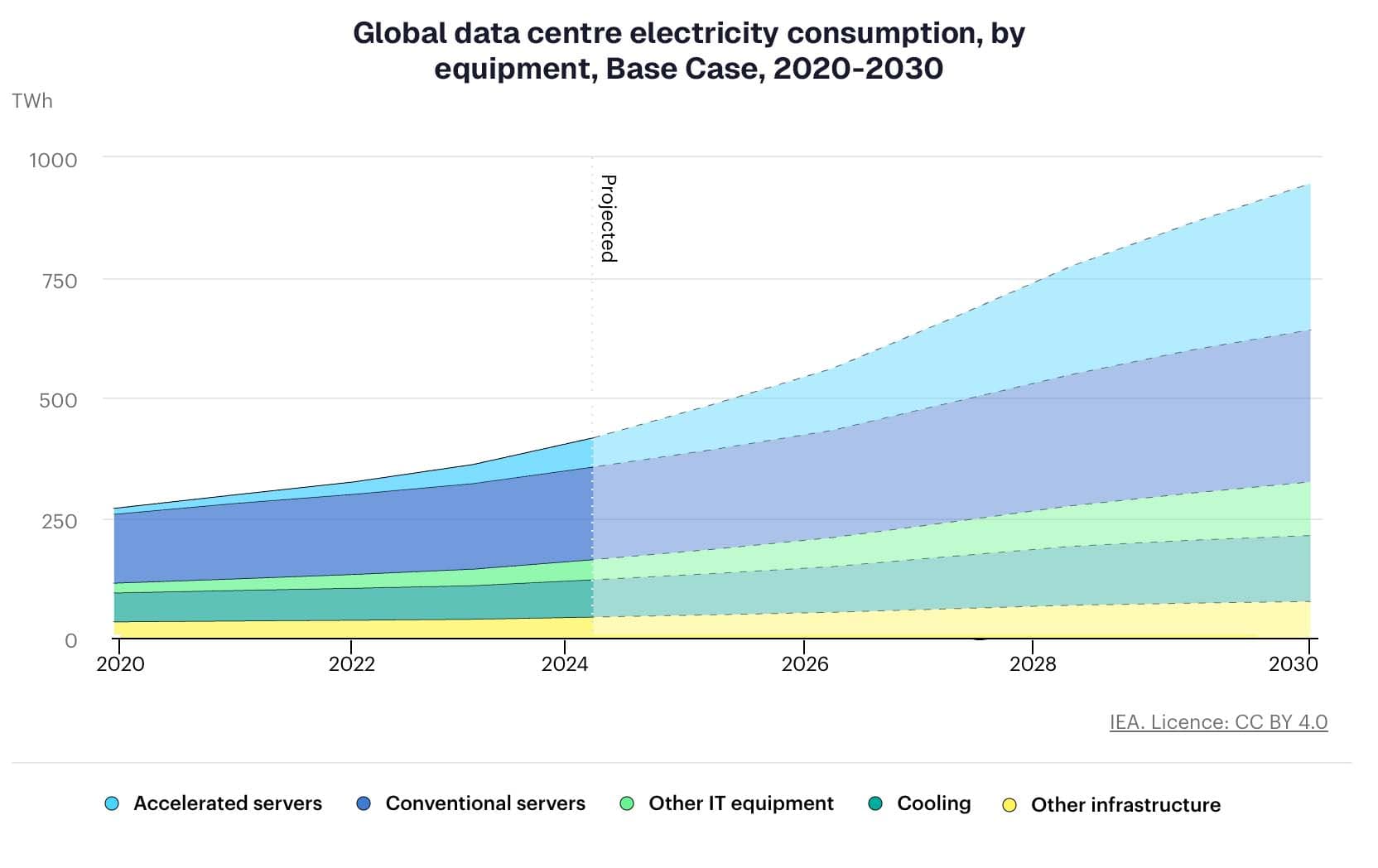

Data centres currently consume around 415 terawatt-hours of electricity globally, roughly 1.5% of all electricity used worldwide. The IEA projects this will more than double by 2030, reaching 945 TWh, equivalent to the entire electricity consumption of Japan. AI is the main driver of that growth, with electricity demand from AI-optimised data centres expected to quadruple by the end of the decade (IEA).

Water is a growing concern too. Data centres need large volumes for cooling, and consumption is rising fast - a single large data centre in Iowa used a billion gallons in 2024 (Google). In absolute terms the current scale is small: AI's direct data centre water use is less than 0.01% of US freshwater consumption (all US data centres combined are closer to 0.04%), and a single AI prompt uses about two millilitres (Masley, 2025). But the growth rate matters, particularly in regions already under water stress where data centres compete with homes and farms for supply.

Here in the UK, data centre expansion is putting pressure on both power networks and water supplies, particularly in South East England. Thames Water has flagged the significant volumes of water required for data centre cooling in the region. The Royal Academy of Engineering published a report in 2025 calling for mandatory environmental reporting by data centres and urging the government to embed sustainability as a criterion in AI policy and procurement.

The tension for environmental charities

There's a particular irony for charities working on environmental issues. AI can help you do your work better, analysing satellite imagery, modelling climate impacts, processing environmental data at scale, as Surrey Wildlife Trust's Space4Nature project demonstrates. But using it contributes to the very problem you're trying to solve.

This isn't a reason to avoid AI. It's a reason to be deliberate about when and how you use it, and to be transparent about the trade-offs. The same applies to any charity with net zero commitments or sustainability policies. AI should be part of those conversations, not exempt from them.

Individual charity use of AI is a tiny fraction of global AI energy consumption. The systemic questions (how data centres are powered, where they're built, how they're regulated) are policy questions, not organisational ones. But charities have a voice in those conversations, and environmental charities in particular have a responsibility to engage with them. The UK government's AI Opportunities Action Plan, published in January 2025, contained only a single passing reference to the "sustainability risks" of AI infrastructure, with no further engagement with climate, emissions, or water impacts. That's a gap worth pushing on.

We cover what this means practically for your organisation, including how to factor AI into sustainability reporting, in Part 2.

5. We can see (some of) the consequences now

When we wrote the first edition, much of the discussion about AI's impact was speculative. Now we've all seen the changes happening.

People are using AI for support. According to a Harvard Business Review analysis of thousands of online discussion posts, "therapy and companionship" is now the number one use case for ChatGPT, ahead of "organise my life" and "find purpose" (HBR, April 2025). AI is available at 2am, doesn't have a waiting list, doesn't judge, and responds immediately.

The tech industry is responding to this demand, developing AI products specifically for emotional support. And there's mounting evidence it can help: eight weeks of regular use of Therabot, a chatbot created by researchers at Dartmouth College, reduced symptoms in users with depression by 51% (NEJM AI, March 2025). Many participants reported feeling the chatbot cared about them.

Whether this is good or bad is complicated. AI might give inaccurate advice or miss safeguarding concerns. But when waiting lists are months long and services are overstretched, people are turning to what's available. For charities providing advice or support services, this raises difficult questions. Should you build your own AI-powered support, use AI to extend what you already offer, or focus on what AI can't do?

How people find information is changing. AI-generated answers now appear at the top of search results. People ask ChatGPT questions they would previously have typed into Google. The direction is clear: AI summaries can cause an 18-64% decline in organic traffic (Bounteous), and around 69% of searches now result in no clicks at all as users get what they need directly from the search engine, up from 56% a year earlier (Similarweb).

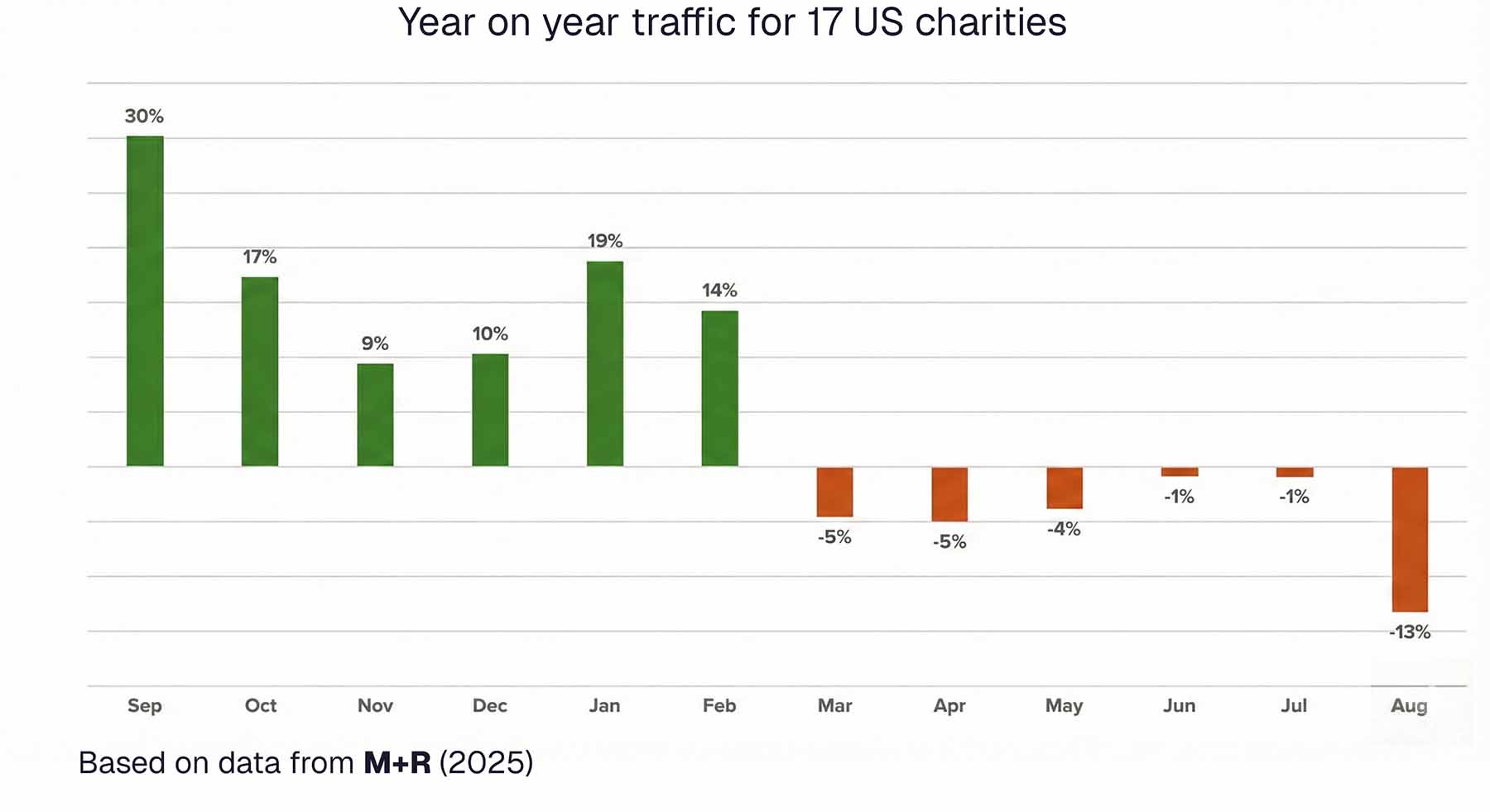

For charities, the impact is already measurable. Between 2024 and 2025, charity website traffic growth slowed from 15% to 12.5%. More concerning: charities experienced the single biggest drop in ranking pages of any sector analysed, falling 28.9% (Tank, October 2025). AI Overviews now appear in nearly one in five searches, around 18% (Pew Research), and when they do, click-through rates drop by roughly 30% (BrightEdge). Google Ad Grants campaigns report average drops of 47% in click-through rates and 60% in actual clicks (Torchbox). A study of 17 US nonprofits found organic traffic down 13% despite brand name searches increasing by 19% - people are looking for these organisations, they're just not landing on their websites (M+R).

The shift is changing how people look for charities too. They're no longer searching "donate to homeless charity." They're asking: "Which charities are actually making a difference for homeless people?" AI systems answer by reading and synthesising information across the web. Whether your charity is part of that answer depends on choices you're making now about your digital estate. For charities that exist to provide information and support, this is a strategic question. If people increasingly get answers from AI instead of from you, what does it mean for your role? We address this in detail in Part 2.

Knowledge itself is being shaped. AI-generated content now makes up a significant proportion of what's published online. Synthetic voices narrate podcasts. AI-generated images illustrate articles. As AI systems increasingly mediate our access to information, there's a risk that understanding of issues gets filtered or distorted in ways that are hard to detect.

6. Technology might be catching up with our problems

One of our observations from last year is that technology might actually be catching up with some longstanding organisational problems. AI is particularly good at tasks that used to require either significant human time or specialist expertise: making sense of unstructured text, cleaning messy data, processing documents at scale, spotting patterns across large volumes of information. These are exactly the bottlenecks many charities have lived with for years because the solutions weren't affordable or accessible.

This doesn't mean AI solves everything. But it does mean some problems that felt intractable might now be worth revisiting.

The most important thing you can bring to AI isn't technical knowledge, it's deep understanding of your own work. The person who's been running your helpline for ten years, the fundraiser who knows why certain appeals work, the programme manager who understands why cases get stuck: these are the people who can identify problems worth solving.

7. AI literacy or AI solutions?

Should you be investing in AI literacy across your organisation, or in specific AI solutions that solve problems? The honest answer is probably a bit of both.

Generic AI literacy has limits. Training everyone on prompting techniques or how large language models work doesn't automatically translate into better fundraising, better service delivery, or better data management. The number of use cases for AI and the types of applications are vast. We identified 89 recipes for this collection and could easily have found hundreds more. Expecting anyone to understand all the applications is unrealistic.

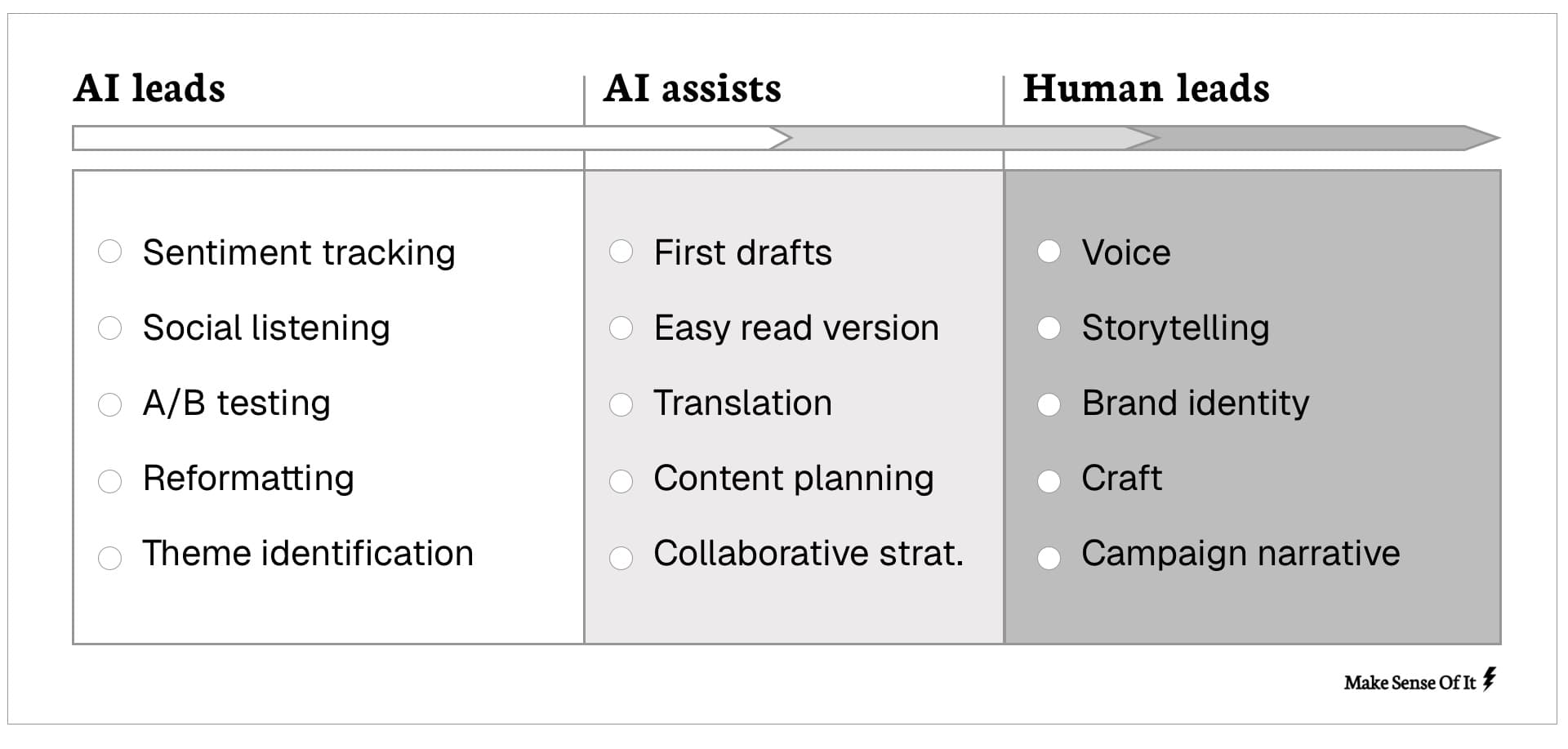

What matters more is domain-specific understanding. Fundraisers don't need to understand AI in theory but they do need to understand what AI can do for fundraising: personalising appeals, spotting lapsing donors, analysing campaign performance. Service delivery teams need to understand the capabilities of AI for triaging enquiries, summarising case notes and scaling support. The recipes are organised by function for exactly this reason.

For individual productivity, people do need enough literacy to use the tools themselves: knowing what AI is good at, where to be sceptical, and how to get useful outputs. This comes from experimenting with real work in their own domain, not from generic training. The National Fire Chiefs Council found this when building AI capability across UK fire services. Generic AI training would have meant nothing to fire professionals. What worked was connecting AI to their actual operational challenges: analysing building inspection reports, improving fire detection, making better resource decisions. Sixty professionals came away able to evaluate AI tools critically and engage with vendors from a position of knowledge rather than uncertainty.

For embedded workflows, literacy probably matters less. When AI is implemented well, the team using it doesn't need to know anything about AI. Breast Cancer Now's survey transcription system handles 20+ different NHS trust form variants. The team interacts with it as part of their normal workflow. They don't need to understand what's happening underneath. They're experts in their programme and the investment was in building the solution, not in building everyone's AI skills.

The measure of success isn't necessarily how many people have been trained. It's whether the work is better.

8. The shift to agentic AI

Until recently, most AI use in charities was conversational. You'd ask a question, get an answer, paste in a document and request a summary. The AI generated content, but you decided what to do with it.

Agentic AI works differently. Instead of generating responses, it takes actions: sends emails, updates databases, books appointments, moves files, executes code, browses the web. You give it a goal, and it figures out the steps to achieve it, choosing which tools to use along the way.

Agent capabilities are now part of the tools charities use: Copilot in Microsoft 365, Agentforce in Salesforce, features in your CRM that take actions on your behalf. If you've seen Copilot offer to send an email or book a meeting, you've already used agentic AI. Watching AI actually do things rather than just say things can feel genuinely strange. We've been working with this technology for a while now and it still catches us off guard sometimes.

The adoption has been rapid. OpenClaw, an open-source AI agent that connects to email, messaging, and calendars, was built by a single developer and went from a weekend project to 145,000 GitHub stars in ten weeks. Anthropic's Agent Teams feature coordinates multiple AI agents on shared work. Gartner predicts 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from less than 5% in 2025 (Gartner, August 2025).

Multi-agent systems take this further. Rather than one AI agent working alone, multiple agents coordinate with each other: one researching, another drafting, a third reviewing. Anthropic's published research shows multi-agent systems outperforming single agents by 90% on research tasks (Anthropic). This matters for governance because when agents coordinate with other agents, not just with humans, traceability and oversight become harder still.

Building custom agents that connect to your specific systems still requires technical expertise, but general-purpose agents like OpenClaw are accessible to non-technical users. The gap between what requires engineering and what someone can set up themselves has narrowed significantly. Many organisations are encountering agentic AI through their existing tools without consciously deciding to adopt it.

The opportunity

Agentic AI is already taking on administrative work that absorbs disproportionate time: chasing information across systems, copying data between tools, sending routine updates, compiling reports from multiple sources. The NHS Copilot trial (covered in the operations section) showed staff saving 43 minutes a day on exactly this kind of work. That's real capacity being freed for work that needs human judgment.

The risks are different

The risks are not the same as with conversational AI. When AI is just suggesting text, you can ignore bad suggestions. When AI is taking actions, the consequences are more immediate.

Accountability becomes harder. If an agent sends an email to a service user, updates a case record, or routes an enquiry to the wrong team, who's responsible? The person who set it up? The organisation? The AI provider? These questions don't have clear answers yet.

Traceability matters more. With conversational AI, you can see the exchange: what you asked, what it said. With agents taking multiple steps across multiple tools, understanding what happened and why becomes harder. If something goes wrong, can you reconstruct the chain of decisions?

Trust boundaries need defining. What should an agent have access to? Your email? Your CRM? Your finance system? The more access you give, the more useful it becomes, but also the more damage it can do if it goes wrong. Function calling hallucination is a real risk: the AI confidently using the wrong tool, or using the right tool incorrectly.

Errors compound. With conversational AI, a hallucination affects one response. With agentic AI, inaccurate information held in an agent's memory can affect multiple decisions over time. One wrong assumption cascades through subsequent actions.

Existing risks get amplified. Bias, hallucination, privacy concerns: these all become more serious when AI is acting, not just advising. An AI that gives biased advice is a problem. An AI that takes biased actions at scale is a bigger one.

Multi-agent complexity. When multiple agents coordinate, as they increasingly can, the traceability problem multiplies. Each agent may make reasonable decisions individually, but the interactions between them can produce outcomes none was designed for. Debugging what went wrong means understanding not just what each agent did, but how they influenced each other.
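One way to keep the chain of decisions reconstructable is an append-only action log. The sketch below is a minimal illustration in Python, not a feature of any particular agent product: the `AgentAction` fields, the `ActionLog` class and the example agent and tool names are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentAction:
    """One step an agent took: which agent, which tool, why, and the result."""
    agent: str
    tool: str
    reason: str
    outcome: str
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


class ActionLog:
    """Append-only record of agent actions, kept in the order they happened."""

    def __init__(self) -> None:
        self._entries: list[AgentAction] = []

    def record(self, action: AgentAction) -> None:
        self._entries.append(action)

    def trail_for(self, agent: str) -> list[AgentAction]:
        """Everything one agent did, in order."""
        return [entry for entry in self._entries if entry.agent == agent]

    def to_json_lines(self) -> str:
        """Serialise for storage or review, one JSON object per line."""
        return "\n".join(json.dumps(asdict(entry)) for entry in self._entries)


if __name__ == "__main__":
    log = ActionLog()
    # Hypothetical agent and tool names, for illustration only.
    log.record(AgentAction("triage-agent", "crm.lookup", "find the enquirer's record", "1 match"))
    log.record(AgentAction("triage-agent", "email.send", "acknowledge the enquiry", "sent"))
    print(log.to_json_lines())
```

Recording the reason alongside the action is the design choice that matters: a list of actions tells you what happened, but only the stated reason lets you see where a wrong assumption entered the chain.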

What the regulators are saying

The Information Commissioner's Office published early thinking on agentic AI in January 2026 (ICO, January 2026). It was explicitly framed as a Tech Futures piece rather than formal guidance, but the message is clear: despite the language of "agency" and "autonomy," organisations remain fully responsible for data protection compliance. The AI might be taking actions, but the accountability sits with you. Governance frameworks, however, have not kept pace with the speed of deployment. The gap between what agents can do and what regulators have guidance for is widening.

Some specific concerns for charities:

Purpose limitation. There's a temptation to give agents broad access to data so they can be more helpful. But "connect to everything and see what helps" conflicts with GDPR requirements to collect and use data for specified purposes. You need tight controls on what data agents can access, not open-ended permissions.

Inferring sensitive data. Agents may infer health conditions, support needs, or other special category data even when not explicitly given it. If you're working with vulnerable beneficiaries, you need to assess whether agents could infer sensitive information and either establish proper legal basis or implement technical measures to prevent it.

The right to explanation. When AI is involved in decisions that significantly affect people, they have rights to understand how those decisions were made. With agents taking multiple steps across multiple tools, providing meaningful explanations becomes harder.
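The purpose-limitation point above can be sketched as a per-purpose field allowlist applied before any record reaches an agent. This is an illustrative Python sketch under our own assumptions: the purposes, field names and `fields_for_purpose` helper are hypothetical, and a real deployment would also enforce this in the system that grants the agent access, not only in application code.

```python
# Hypothetical mapping from a declared purpose to the only fields it may see.
ALLOWED_FIELDS: dict[str, frozenset[str]] = {
    "appointment_reminder": frozenset({"first_name", "appointment_time", "contact_preference"}),
    "donation_receipt": frozenset({"first_name", "last_name", "gift_amount", "gift_date"}),
}


def fields_for_purpose(record: dict, purpose: str) -> dict:
    """Return only the fields allowed for this purpose; refuse unknown purposes."""
    if purpose not in ALLOWED_FIELDS:
        raise PermissionError(f"No data access defined for purpose: {purpose!r}")
    allowed = ALLOWED_FIELDS[purpose]
    return {key: value for key, value in record.items() if key in allowed}


if __name__ == "__main__":
    # Invented example record.
    record = {
        "first_name": "Sam",
        "last_name": "Example",
        "appointment_time": "2026-03-02T10:00",
        "contact_preference": "sms",
        "case_notes": "Sensitive free text the reminder agent never needs.",
    }
    print(fields_for_purpose(record, "appointment_reminder"))
```

The default here is refusal: a purpose nobody has defined gets no data at all, which is the opposite of "connect to everything and see what helps".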

Part 2: Charity digital in the age of AI

9. The website is no longer your front door

The fundamental shift

For twenty years, charity websites have been designed as destinations. You optimised for search engines that would send people to you. You measured success by traffic, time on site, bounce rates. The website was your digital front door.

That model is breaking down. You might rank first, but if AI summarises your content, people won't click through. You're being read, but not visited.

The shift runs deeper than how people find charities. Many charities have become trusted content providers, creating health information, mental health guidance, rights advice, support resources with proper content standards and regular review processes. People are increasingly turning directly to AI for this content instead. The carefully reviewed charity expertise still informs what AI knows, but the trust relationship is lost.

Why charities are especially exposed

Charities are performing better than most sectors on raw traffic, largely because people searching for charity information are often intent-driven: they want to donate, volunteer, verify a cause. Those searches still result in clicks, for now. Across all industries, traffic growth collapsed from 26.3% to 3.7%, while charities slowed from 15% to 12.5% (Tank, October 2025).

But the content most affected is exactly the kind charities produce most: high-trust informational content explaining complex issues, educating people about conditions, clarifying rights and entitlements. If your content explains, educates, or provides information, Google's AI increasingly wants to summarise it directly rather than send people to you.

Google Ad Grants under pressure

For many smaller charities, Google Ad Grants have been the only realistic way to compete for digital visibility. The programme provides up to $10,000 per month in free advertising, and it changed how those organisations reached people online.

But the impact of AI Overviews on Ad Grants is severe. Lower click-through rates damage quality scores, making ads more expensive to run and less likely to appear prominently. Even when they do appear, they're often positioned below the AI Overview, competing for scraps of attention. The free advertising that levelled the playing field is becoming less effective, and organisations with paid advertising budgets are better positioned to adapt.

From keywords to questions

People used to search: "donate to children's charity." Now they're asking: "Which children's charities are actually making a difference?" "How much of my donation reaches the children?" "What's the difference between Save the Children and Barnardo's?"

They're not looking for your homepage. They're looking for answers. AI systems reward content that provides clear, direct, well-structured answers. The old SEO playbook focused on keywords and backlinks. The new reality prioritises what Google calls E-E-A-T: Experience, Expertise, Authoritativeness, and Trustworthiness.

Charities actually have an advantage here. You have experience because you're doing the work directly. You have expertise because your staff and volunteers understand the issues deeply. But that advantage only materialises if your digital content demonstrates it in ways AI systems can understand and reference.

The agentic web

Today, AI mostly summarises information and occasionally takes simple actions. But the shift toward AI that completes tasks is already underway. A supporter can now say to their AI assistant: "Donate £50 to a homelessness charity in Bristol." The AI browses the web, assesses options, and completes the donation without the supporter ever visiting a website. Or: "Sign me up to volunteer with an environmental charity on Saturdays." The AI finds options, compares them, and submits the application on the user's behalf. These scenarios are working in limited contexts today.

This is developing faster than most predicted. The building blocks - AI agents that can browse, evaluate, and act on behalf of users - are already functional. Nobody knows the exact adoption curve, and predictions about technology timelines are notoriously unreliable. But the gap between "possible in a demo" and "available to ordinary users" has narrowed sharply, and the direction is consistent enough that it's worth preparing for now rather than waiting.

If AI becomes a significant interface between your charity and the people you serve, your digital infrastructure needs to work for AI agents, not just human visitors. That means structured data, clear service descriptions, and information that machines can parse reliably, not just content that reads well to humans.
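As one illustration of "information that machines can parse reliably", the Python sketch below builds schema.org structured data (JSON-LD) for a charity and one of its services. The organisation, URL and service details are invented placeholders. `NGO` and `Service` are real schema.org types, but which properties any given AI system actually reads is an assumption we can't verify.

```python
import json


def charity_jsonld(name: str, url: str, description: str, area_served: str) -> dict:
    """Describe the organisation itself using the schema.org NGO type."""
    return {
        "@context": "https://schema.org",
        "@type": "NGO",
        "name": name,
        "url": url,
        "description": description,
        "areaServed": area_served,
    }


def service_jsonld(name: str, description: str, provider: dict, area_served: str) -> dict:
    """Describe one service, pointing back to the organisation that provides it."""
    return {
        "@context": "https://schema.org",
        "@type": "Service",
        "name": name,
        "description": description,
        "areaServed": area_served,
        "provider": {"@type": provider["@type"], "name": provider["name"], "url": provider["url"]},
    }


def as_script_tag(data: dict) -> str:
    """Wrap the data for embedding in a page's HTML."""
    # Escape "</" so text inside the JSON cannot close the script element early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">{body}</script>'


if __name__ == "__main__":
    # Placeholder organisation and service, for illustration only.
    org = charity_jsonld(
        "Example Shelter Trust",
        "https://example.org",
        "Emergency accommodation and advice for people facing homelessness.",
        "Bristol",
    )
    helpline = service_jsonld(
        "Housing advice helpline",
        "Free phone advice on housing rights, open weekdays.",
        org,
        "Bristol",
    )
    print(as_script_tag(org))
    print(as_script_tag(helpline))
```

Describing services separately from the organisation is deliberate: an agent asked to find "a homelessness charity in Bristol" needs to match on what you offer and where, not only on who you are.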

Competition for AI visibility

If AI becomes the primary way people discover charities, being invisible to AI is being invisible to donors. This creates a new form of digital inequality. Large charities with significant digital teams can afford to restructure their digital estates for AI visibility: implementing schema markup, building API endpoints, optimising for AI citations. Smaller charities risk being filtered out not because their work is less impactful, but because their digital presence doesn't meet AI systems' requirements for structured, easily parseable information.

There's also a reputational risk. AI systems don't always represent organisations accurately. If the information AI surfaces about your charity is outdated, incomplete, or drawn from unreliable third-party sources, you lose control of how people understand your work, and you may not even know it's happening.

Not all content is equally affected today. AI struggles to replace services people can use or act on: helplines, support groups, volunteer opportunities, interactive tools, real-time information, and transactional pages for donations and applications. AI more successfully replaces informational content: explanations of conditions or rights, general guidance, background information about causes, and educational content.

If your website is primarily informational, you're most vulnerable to AI displacement right now. If it provides services, tools, and actionable next steps, you're more resilient today. But that resilience has a shelf life. As AI agents become capable of browsing websites, filling in forms, and completing transactions on behalf of users, transactional content becomes vulnerable too. A donation page is safe from an AI Overview that summarises information. It's not safe from an AI agent that can navigate to it and complete the gift without the donor ever seeing your website.

What charities need to do now

Optimising informational content for AI to cite, and service pages for AI to find, is the right immediate priority. But the longer-term strategy can't simply be "make everything transactional." Charities will increasingly need to think about how their services work when accessed through AI agents, not just how their content reads when summarised.

The good news is that charities have shown resilience. Organic traffic growth is down, but it hasn't collapsed, and charities are adapting faster than many sectors. There are concrete steps any charity can take, falling broadly into two areas: making your content work for AI systems, and understanding how AI currently sees you.

Making your content work for AI systems

The shift from keywords to questions means your content strategy needs to start with what people actually ask. Look at your support emails, helpline logs, and social media messages. Structure content around those real questions, with clear direct answers in the first paragraph and evidence-based detail following. Use headings that reflect real questions. Make it easy for AI to extract and summarise accurately.

Structured data (sometimes called schema markup) tells AI systems what your information means. It's the difference between AI reading "We helped 1,000 people" and AI understanding "This organisation provided housing support to 1,000 individuals in 2025." This is technical work, but increasingly accessible. Tools like Google's Structured Data Markup Helper can generate the code, and some website platforms include structured data features. If you're working with developers or agencies, this should be a priority conversation, focusing on organisation schema that explains who you are, service schema describing what you provide and for whom, and event schema for activities and registration.
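
To make that concrete, here is a minimal sketch of what organisation and service markup could look like, written as a short Python script that prints JSON-LD (one common format for structured data). The charity name, URLs, and descriptions are hypothetical placeholders, and the properties shown are an illustrative subset of the schema.org vocabulary rather than a recommended or complete set. Your developers or platform may generate this differently.

```python
import json

# Hypothetical example data: swap in your own organisation's details.
organisation = {
    "@context": "https://schema.org",
    "@type": "NGO",
    "@id": "https://example.org/#organisation",
    "name": "Example Housing Charity",
    "url": "https://example.org",
    "description": "Provides housing support to people at risk of homelessness in Bristol.",
    "areaServed": "Bristol",
}

# A service description that says what is provided and for whom.
service = {
    "@context": "https://schema.org",
    "@type": "Service",
    "name": "Housing advice drop-in",
    "serviceType": "Housing support",
    "provider": {"@id": organisation["@id"]},  # points back to the organisation above
    "areaServed": "Bristol",
    "audience": {
        "@type": "Audience",
        "audienceType": "Adults at risk of homelessness",
    },
}


def to_json_ld_script(data: dict) -> str:
    """Wrap a schema.org dictionary in the script tag that web pages embed."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so text inside the data can never close the script tag early.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(to_json_ld_script(organisation))
    print(to_json_ld_script(service))
```

The output is a pair of script tags that would sit in the page's HTML. Event markup follows the same pattern with an "Event" type. Whatever route you take, check the result with a structured data testing tool before relying on it.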

Your content also needs to demonstrate the expertise and trust that charities have. This means showing where content comes from: author bios, credentials, review dates, citations, expert review processes, and lived experience representation. If clinicians reviewed your health information, make that visible. If solicitors were involved in your legal guidance, say so. AI systems increasingly weight content that demonstrates genuine authority.

Beyond your own site, brand mentions matter more than they used to. AI systems increasingly draw on references to your organisation across the web, even without links. Social media presence, media coverage, being referenced in podcasts and discussions, appearing in sector conversations: these all contribute to how visible you are to AI. You can't control all of this, but you can influence it through PR, partnerships, and active participation.

Understanding how AI sees you

The simplest and most revealing step is to actually search for your organisation using AI tools. Ask ChatGPT about your charity. Search your services in Google's AI Mode. See what Perplexity says about your cause area and whether you appear. The results will tell you whether AI knows you exist, how accurately it describes your work, what sources it's citing, and where information is outdated or wrong. This isn't a one-time check. AI systems update regularly, and ongoing monitoring matters.

What this means for the people you serve

The shift to AI-mediated information access has serious implications for beneficiaries. If someone in crisis searches "mental health support near me," and AI summarises options without them visiting websites, several things can go wrong. AI might miss specialised services that would be most appropriate, recommend services at capacity or with waiting lists, misunderstand eligibility criteria, or not convey urgency or safeguarding concerns.

For people already digitally excluded, AI adds another layer of complexity. Your website, for all its imperfections, is something people can learn to navigate. AI systems require different skills: knowing how to ask the right question, interpreting generated responses, recognising when something is wrong. That's a reason to maintain your website as an accessible, navigable resource - not to abandon it in favour of AI-first approaches. The people most at risk of being left behind are often the people charities exist to serve.

Most charities making decisions about AI don't actually know how their communities feel about it or whether they're using it. Are your service users already turning to ChatGPT for the kind of advice you provide? Are your volunteers comfortable with AI-assisted coordination, or does it put them off? Do the people you work with trust AI, fear it, or have no experience of it at all? A charity whose beneficiaries are digitally confident young people faces entirely different considerations from one working with older people still getting comfortable with email. The answers will vary enormously, and assumptions are dangerous. Find out.

The chatbot question

Many charities are implementing AI chatbots to handle common inquiries, triage service requests, or provide basic guidance. Some are doing this thoughtfully. WECIL, a disabled-led charity, created "Cecil from WECIL", a chatbot built around Easy Read design principles to make information more accessible to people with learning disabilities. Here, AI is explicitly designed to reduce barriers rather than create them, and it was developed by an organisation led by the people it serves.

But not all implementations are this considered. When chatbots replace the helpline worker who recognises distress in someone's voice, the advisor who asks follow-up questions that reveal underlying issues, or the person who builds rapport over multiple interactions, something important is lost. The distinction isn't chatbots versus no chatbots. It's whether the implementation centres the needs of the people using it, or the efficiency goals of the organisation running it. Human alternatives must remain accessible and visible, not hidden behind multiple failed attempts to use AI.

Co-design, not afterthought

Goose, an AI marketing platform built for heritage organisations in partnership with the Arts Marketing Association and funded by the National Lottery Heritage Fund, is a good example of what co-design looks like in practice. There was real scepticism about AI in heritage: concerns about job losses, authenticity, craft. Rather than building the most obvious solution and hoping people would adopt it, the team spent months in twice-weekly co-design sessions with a small core group of heritage partners, before beta-testing with over 40 more organisations, building and testing functional prototypes throughout. They built and discarded a basic chatbot (too shallow), a trusted-sources system (too brittle), a funding application assistant (too narrow), and a fully autonomous agent (too much delegation, as users wanted to retain control). The "thinking partners" concept that became Goose only emerged through this iterative process. It wouldn't have been designed correctly without the co-design, and given the sector's scepticism, it wouldn't have been adopted without it either.

Noise Solution CIC found something similar working with young people on AI analysis of reflection videos from music sessions. The technology worked effectively, but implementing it revealed a deeper challenge about trust. As they reflected: "Looking back, we would invest even more in participant co-design from the outset, ensuring that young people fully understand how AI analysis works, and feel confident about how their data is used." The trust required for young people to engage took longer to build than the technology itself.

The pattern is consistent: you can't design accessible AI services without involving the people who might be excluded by them. If your charity exists to reduce inequality, and your adoption of AI increases barriers for the people you serve, you have a fundamental misalignment between mission and method. The question to keep asking is simple: who benefits from this, and who is being left behind?

Capacity and the digital divide

None of this is straightforward for small charities with limited digital resources. Restructuring your digital estate for AI visibility requires technical knowledge, ongoing maintenance, and resources many organisations don't have. This is where sector infrastructure matters. Collective investment in shared tools, templates, and training could prevent a digital divide where only large charities remain visible to AI systems. Organisations like CAST, Charity Digital, and Charity Excellence Framework are working on this, but the pace of change is fast and the gap risks widening.

This isn't just a technical question about search rankings. It's a justice question about who has access to support. The organisations starting to think about this now will be better placed as AI becomes how more people find charities. Even small steps, like structuring your content for AI or checking how AI describes your organisation, can make a real difference.

Part 3: Inside your organisation

We cover the functional areas - fundraising, service delivery, communications, data - in detail later. We've chosen that focus because there's immediate value in those areas and the barriers to getting started aren't too high.

But AI raises bigger questions that go beyond individual and team-level applications. Questions about how your organisation works, how you plan, how you structure teams, how you make decisions under uncertainty. This section is less about AI specifically and more about leading an organisation when the ground is shifting.

McKinsey's 2025 State of AI research offers some useful benchmarks. Eighty-eight percent of organisations now use AI in at least one business function, up from 78% a year earlier. But more than 80% aren't yet seeing meaningful impact at an organisational level. The gap isn't about technology, it's about how organisations are adapting. The single biggest factor in whether organisations see real returns is redesigning workflows. Yet only 21% have done this, whilst most are still overlaying AI onto existing processes (McKinsey, March 2025).

10. Managing risk

Not every AI use carries the same risk. Drafting a newsletter is different from processing safeguarding referrals. Summarising meeting notes is different from triaging service users. The challenge is that most charities don't have a structured way to think about this, so either everything gets treated as high-risk (and nothing happens) or everything gets treated as low-risk (and something goes wrong).

A simple framework helps: what could go wrong, who could be harmed, how would we know if it was going wrong, and what's our fallback if it fails? Higher stakes need more oversight. Lower stakes can move faster. The key is being deliberate about which category you're in, rather than applying the same level of caution to everything.

What different risk levels look like in practice

At the lower end, individual productivity tasks like using AI to draft emails, summarise documents or brainstorm ideas carry limited risk as long as a human reviews the output. That's the consistent pattern across the case studies in this playbook: AI drafts, transcribes, or tags, and a person checks the result before it goes anywhere. That human review step is what keeps low-risk applications genuinely low-risk.

In the middle, internal workflows that touch organisational data or involve multiple people need more thought. SCVO's approach to meeting transcription is a good example of proportionate governance. They chose to use Microsoft Teams' built-in transcription with their organisational Copilot account rather than third-party tools, specifically because it kept data within their existing Office 365 environment. They developed a retention policy for raw transcripts, sought consent before recording, and explained the process to participants up front. But they were also clear about limits: they wouldn't use this for high-stakes calls where accurate records were critical. That kind of honest boundary-setting, knowing where your approach is good enough and where it isn't, is more useful than blanket policies.

At the higher end, anything that touches the people you serve directly needs the most careful handling. Noise Solution CIC's work analysing reflection videos from young people in music sessions shows what rigorous governance looks like: staff check, review and contextualise all AI outputs before they're used, there's a formal governance stage before results are shared or reported, and the team is actively working on prompt-based bias mitigation to ensure responses are informed by equity and diversity considerations. This level of oversight takes time and resource, but it's proportionate to the stakes. These are vulnerable young people's reflections on their own wellbeing.
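
One way to make the framework routine is to record each proposed use of AI in a consistent shape. The sketch below is an illustration only: the field names and the tiering rule are our assumptions, drawn loosely from the three levels described above, and the rule is deliberately crude. It is a prompt for discussion, not a substitute for judgment.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOWER = "lower"
    MIDDLE = "middle"
    HIGHER = "higher"


@dataclass
class AIUseCase:
    """One proposed use of AI, with answers to the four framework questions."""
    name: str
    touches_people_served: bool        # e.g. service users' own words or wellbeing
    touches_organisational_data: bool  # e.g. internal meetings, shared records
    what_could_go_wrong: str = ""
    who_could_be_harmed: str = ""
    how_would_we_know: str = ""
    fallback_if_it_fails: str = ""


def risk_tier(use_case: AIUseCase) -> RiskTier:
    """Assumed rule of thumb: the closer to the people you serve, the higher the tier."""
    if use_case.touches_people_served:
        return RiskTier.HIGHER
    if use_case.touches_organisational_data:
        return RiskTier.MIDDLE
    return RiskTier.LOWER


def unanswered_questions(use_case: AIUseCase) -> list[str]:
    """List the framework questions that still have no written answer."""
    questions = {
        "What could go wrong?": use_case.what_could_go_wrong,
        "Who could be harmed?": use_case.who_could_be_harmed,
        "How would we know if it was going wrong?": use_case.how_would_we_know,
        "What's our fallback if it fails?": use_case.fallback_if_it_fails,
    }
    return [question for question, answer in questions.items() if not answer.strip()]


if __name__ == "__main__":
    examples = [
        AIUseCase("Draft a newsletter", False, False),
        AIUseCase("Transcribe internal meetings", False, True),
        AIUseCase("Analyse young people's reflection videos", True, True),
    ]
    for example in examples:
        print(example.name, "->", risk_tier(example).value)
        print("  Still to answer:", unanswered_questions(example))
```

The tier decides how much oversight a use needs. The unanswered questions show whether you have thought it through yet. Human review of outputs applies at every tier.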

The closer AI gets to beneficiaries, the more attention it needs

Off-the-shelf tools like ChatGPT and Claude are general-purpose. They're not built for your specific safeguarding requirements, your service users' needs, or your regulatory context. For internal productivity, that's usually fine. For high-stakes interactions with the people you serve, you may need something more considered: AI that's been tested against real scenarios, constrained to verified information, and designed with humans in control of the decisions that matter.

Breast Cancer Now's transcription system is an example of this. Processing survey data from cancer patients across 20+ NHS trust form variants requires 99% accuracy. A general-purpose chatbot isn't appropriate. The system was engineered specifically for that context, with different AI approaches used for different parts of the process depending on what each step required in terms of speed, accuracy, cost, and consistency.

Drawing clear lines

Most organisations find it useful to establish some non-negotiables early: AI won't replace human support in crisis situations, won't make decisions about access to services without human involvement, won't interact with people in ways that disguise what it is. These aren't just governance statements, they're design constraints that shape what you build and how you build it.

Once you're clear on what's off the table, other decisions get easier. The space between "definitely fine" and "definitely not" is where judgment lives, and that judgment gets better with experience. Start with lower-risk applications, learn how AI behaves with your data and in your context, and build confidence before moving to higher-stakes uses.

11. Disclosure and accountability

When we wrote the first edition, transparency about AI use felt like a significant ethical question. Should you tell people if AI helped draft that appeal? Does AI-generated content need labelling?

A year on, things have moved. AI is now embedded in so many tools and processes that drawing a clear line around "AI-generated content" is increasingly difficult. Guaranteeing anything as AI-free is becoming harder. If someone uses Copilot to restructure an email, Grammarly to polish the language, and then edits it themselves, what percentage is "AI-generated"? The question doesn't really have a useful answer.

So the more practical question is: what actually matters to the people we serve? Some things probably don't need flagging, like using AI to tidy up grammar or structure a first draft. Some things clearly do. If someone thinks they're talking to a person and they're actually talking to a chatbot, that needs to be clear. If AI is influencing decisions about who gets support or how cases are prioritised, people have a right to know. If you're using AI to personalise communications in ways that might feel manipulative if people knew, that's worth examining.

The Wildlife Trusts found that being upfront about AI use actually strengthened trust. When they used AI image generation to visualise rewilded landscapes for early-stage fundraising, they clearly labelled all outputs as AI-generated. Some funders were initially concerned about "fake" images, but clear labelling combined with expert ecological validation meant people engaged with them as conversation starters, not finished plans. Transparency made the work more credible, not less.

The British Museum's experience in January 2026 shows how badly this can go without proper review. The museum shared AI-generated images on social media showing a woman contemplating exhibits. Archaeologists quickly spotted the images were artificial and raised concerns about cultural representation: the AI figure appeared in traditional East Asian clothing in some images but wore Mexican-style attire while looking at an Aztec artefact, as if all cultures were interchangeable. The museum deleted the posts, then made things worse by unfollowing critics. The damage wasn't the AI use itself. It was the absence of review before posting and the defensive response afterwards.

A useful test is: would the person on the other end want to know? If they'd feel deceived or manipulated by not knowing, tell them. If they genuinely wouldn't care, you probably don't need to flag it. The principle isn't about disclosing every use of AI. It's about maintaining the trust that your organisation depends on.

12. Data as foundation and risk

Most useful AI applications need decent data to work with. That doesn't mean perfect data, but data you can find, understand, and trust enough to act on.

Most charities we work with have data scattered across systems, inconsistent formatting, duplicate records. What counts as a "donor" or "active supporter" means different things to different teams. Everyone aspires to a "single source of truth" but in reality many organisations aren't there yet.

The problem goes beyond blocking AI adoption. AI working with bad data produces confident, plausible, wrong results. If your CRM is full of duplicates and your AI is identifying "lapsing donors," some of those predictions will be wrong in ways that waste effort or damage relationships. If your case notes are inconsistent and AI is summarising them, the summaries will inherit the inconsistencies. AI doesn't fix messy data, it amplifies it.

There's a related opportunity that's easy to overlook. Most charities collect far more qualitative data than they ever use. Free-text survey responses, feedback forms, case notes, interview transcripts, open-ended evaluation questions. This material is rich with insight, but it's historically been too time-consuming to work with properly. Extracting themes from hundreds of open-ended comments by hand takes days that most teams don't have, so the data sits in folders or gets reduced to a few hand-picked quotes in a report.

AI changes the economics of this. It can surface patterns across large volumes of qualitative data in hours rather than weeks. The Brilliant Club found this when using Miro's AI tools to analyse hundreds of open-ended comments from students, teachers and tutors, saving significant analysis time on work that would previously have been prohibitively slow. WaterAid's Performance and Insight team ran a similar exercise using Google Colab to analyse 150 supporter survey responses alongside demographic data, turning weeks of potential manual analysis into a single workshop.

The bigger prize is connecting qualitative and quantitative data in ways that weren't practical before. You might know from your CRM that 200 service users dropped off in the last quarter. But the reasons why are buried in case notes, feedback forms and exit surveys that nobody has time to read systematically. AI can bridge that gap, turning unstructured text into something you can analyse alongside your numbers. The result is a fuller picture of what's actually happening, not just what's easy to count.

This plays out the same way across the sector. Charities that rushed into AI tools without sorting their underlying data found the AI confidently producing nonsense. The investment in data quality isn't glamorous, but it's what makes everything else possible.

The good news is that AI is making data cleaning itself more approachable. Tasks that once required specialist skills or expensive consultants can now be tackled by people closer to the work. You can use AI to find duplicates, standardise formats, spot inconsistencies and clean up years of messy data entry. Progress is possible from wherever you're starting. The important thing is to start, and to be honest about where you are.
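
As an illustration of how small a first step can be, here is a minimal sketch of the kind of script an AI assistant might help a non-specialist write. It standardises email addresses and flags records that probably belong to the same person. The record layout (an "id" and an "email" field) is a hypothetical stand-in for a CRM export, and matching on email alone is a simplifying assumption. Real duplicates often need fuzzier matching on names and addresses.

```python
from collections import defaultdict


def normalise_email(email: str) -> str:
    """Standardise formatting so trivially different entries compare equal."""
    return email.strip().lower()


def find_likely_duplicates(records: list[dict]) -> dict[str, list]:
    """Group record ids by normalised email and keep groups with more than one id."""
    groups = defaultdict(list)
    for record in records:
        key = normalise_email(record.get("email", ""))
        if key:  # skip records with no email rather than lumping them together
            groups[key].append(record["id"])
    return {email: ids for email, ids in groups.items() if len(ids) > 1}


if __name__ == "__main__":
    # Hypothetical rows, as they might appear in an exported supporter list.
    supporters = [
        {"id": 1, "email": " Sam@Example.org"},
        {"id": 2, "email": "jo@example.org"},
        {"id": 3, "email": "sam@example.org "},
        {"id": 4, "email": ""},
    ]
    for email, ids in find_likely_duplicates(supporters).items():
        print(f"Possible duplicate: {email} appears in records {ids}")
```

The script only flags candidates. Someone who knows the data should still decide which records to merge, because two people can legitimately share an email address.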

13. Skills and structure

AI is democratising certain capabilities. Data analysis that once required specialists can now be done by people closer to the work. Internal tools that traditionally needed developers can be built by people who aren't technical. Product design and build is becoming more accessible to smaller teams.

What does this mean for how you structure teams? If a fundraiser can query the CRM in plain English, what happens to the data analyst role? If a programme manager can build a simple app to track outcomes, do you still need the same development capacity? If AI can produce a first draft of almost anything, what happens to the junior roles where people learned to write?

These questions don't have settled answers yet, but there are early signs of how roles shift in practice. In the NHS's largest AI scribe trial, covering 17,000 patient encounters across nine London sites, AI handling clinical documentation gave clinicians 23.5% more time for direct patient interaction. The role didn't shrink - it shifted toward the work that matters most. Street Support Network found the same with meeting coordination: the real gain wasn't the two hours saved per meeting, it was the shift from managing logistics to being fully present in conversations.

The pattern is that AI tends to absorb the mechanical parts of a role and leave the parts that require judgment, creativity and human connection (McKinsey, November 2025). The skills that matter shift from execution to judgment. Not "can you write this?" but "is this good enough?" Not "can you build this?" but "should we build this?" Not "can you analyse this data?" but "what does it mean and what should we do about it?"

New roles are emerging too. In the corporate world, AI Ethics Officer, AI Governance Specialist, and Responsible AI Lead are now established job titles, typically sitting across compliance, privacy, or legal teams. Most charities aren't hiring dedicated roles yet, but the functions still need to exist somewhere. Someone needs to be thinking about what data can go into AI tools, how outputs are reviewed, whether the organisation's use of AI is consistent with its values, and what happens when something goes wrong. In larger charities that might eventually mean a dedicated role. In smaller ones it might mean adding AI governance to an existing remit and making sure the person has time and support to do it properly. The risk is the same as with strategic leadership: if it's everyone's job, it's nobody's.

Street Support Network's advice is to treat AI "like a junior team member: it needs a proper induction, ongoing supervision, and clear boundaries." That framing is useful because it suggests a relationship that evolves as trust builds, rather than a tool you switch on and leave alone.

For now, it's probably worth investing in AI fluency across the organisation. Not deep technical skills, but enough understanding that people can use tools effectively and spot opportunities in their own work. Significant reskilling is expected over the next few years, and organisations that start now will be better placed than those that wait.

14. How work changes

The obvious use of AI is speeding up tasks you already do. Draft this email faster. Summarise this document quicker. That's real value, but it's not the whole picture.

Redesigning a workflow means rethinking how work moves through your organisation, not just making individual steps faster. It might mean changing who does what, removing steps that only existed because of human bottlenecks, or combining tasks that used to be separate. If your grant application process involves a fundraiser drafting, a manager reviewing, a director approving, and a designer formatting, AI doesn't just speed up the drafting. It might mean the fundraiser can now produce something closer to final, reducing the review loops entirely.

What workflow change actually looks like

Blood Cancer UK's user research workflow used to follow a familiar pattern: record an interview, spend two or more hours manually transcribing it, read through repeatedly to identify themes, manually tag and code the data, write up a summary, and share it as a document that might or might not get read. With AI handling transcription and initial theme tagging through Dovetail, the mechanical steps collapsed. But the interesting shift wasn't just speed. The researchers found themselves with time to do something they'd never had capacity for: creating short video insight reels drawn from the interviews themselves. Seeing and hearing real service users moved senior leaders to act in ways that written reports never had. The workflow didn't just get faster. It changed shape entirely, and the output became more valuable.

Street Support Network's meeting workflow tells a similar story at a smaller scale. Before, the cycle was familiar to anyone in the sector: back-and-forth emails to find a time, the meeting itself where you're half-listening and half-taking notes, hours afterwards writing up actions and follow-ups. They built a three-stage workflow using existing tools: Calendly handles scheduling automatically, Krisp records and transcribes during the meeting, and an LLM processes the transcript afterwards into a summary with actions and follow-ups. The time saving averages two hours per meeting, but as they reflected, "the biggest surprise was how much mental energy was freed up. It's not just the time saved - it's the elimination of constant scheduling stress and the confidence to say 'I'll send you that briefing this afternoon' knowing you can actually deliver."

Where the human effort moves

It's worth thinking carefully about where the work shifts to. AI might handle the first draft, but someone still needs to judge whether it's good enough. AI might summarise the case notes, but someone still needs to decide what to do. AI might identify the at-risk donors, but someone still needs to build the relationship. The work doesn't disappear - it shifts from execution to judgment, from processing to decision-making, from logistics to relationships.

Understanding where work shifts to matters because it affects resourcing. If AI handles the drafting but creates more need for quality review, you haven't saved time - you've moved it. If AI surfaces insights but nobody has capacity to act on them, the investment is wasted. The organisations getting value from AI aren't just automating tasks. They're thinking about the whole workflow and making sure the human parts are properly resourced too.

Most organisations are still overlaying AI onto existing processes, making individual steps faster without rethinking how the work flows. That's a start, but it's not where the real value lies.

15. Buy, build or wait

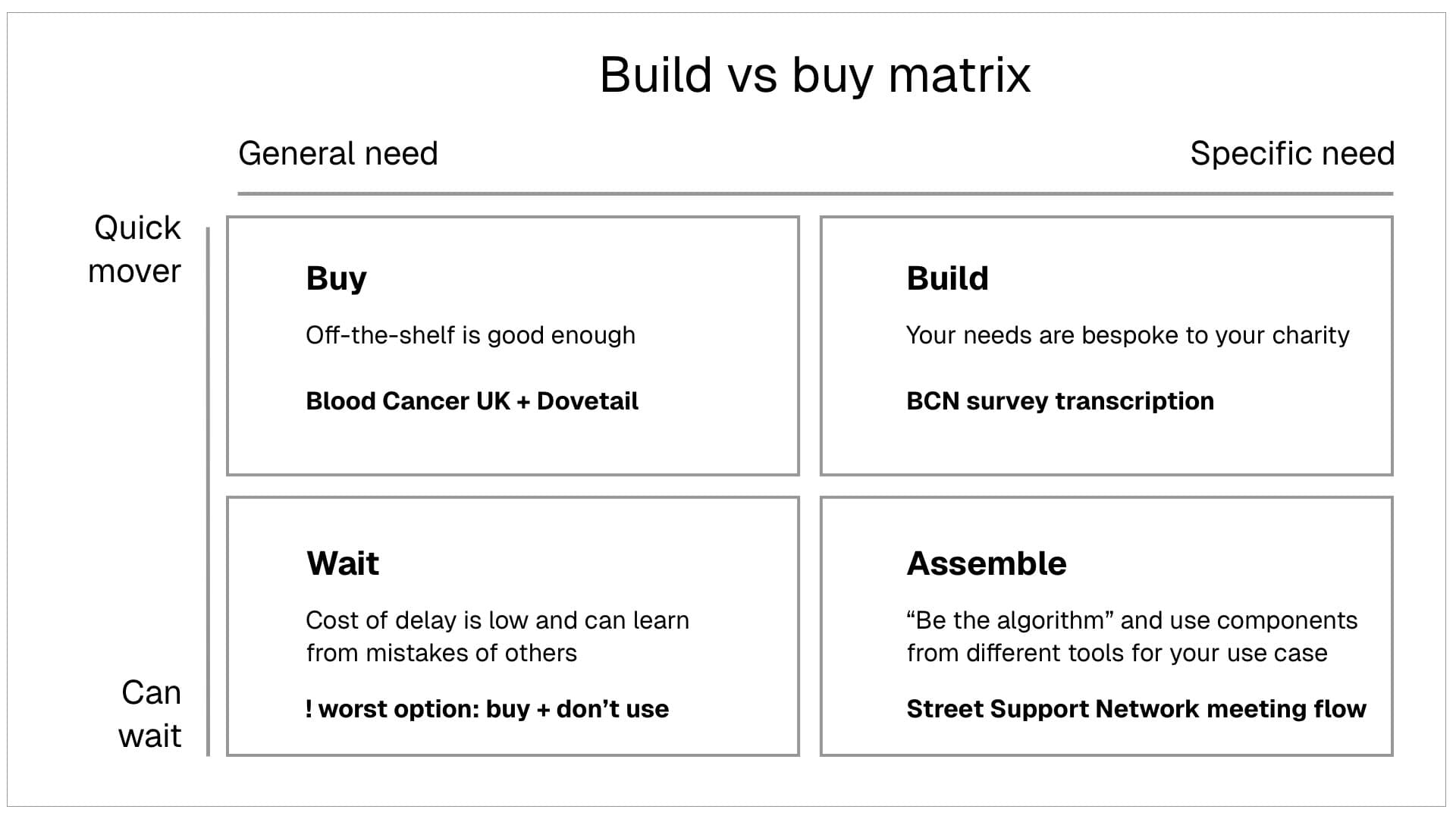

Do you adapt your processes to fit commercial software, or do you pursue something custom that fits your processes?

Charities have historically leaned toward buying. The costs of custom development were prohibitive for all but the largest organisations. Better to compromise on fit than spend money you don't have on building something bespoke.

That calculation is changing. The costs of custom development are falling fast. What once required a team of developers over months can sometimes be built in days. Tools like Claude Code mean that people with some technical comfort but no formal development background can create functional applications. Custom is becoming accessible to organisations that couldn't have considered it before.

But custom still requires maintenance, expertise, and ongoing attention. If you build something, you own it - including when it breaks, when it needs updating, when the person who built it leaves. There's a reason off-the-shelf exists.

The CAST experiments show three different routes working. Blood Cancer UK bought Dovetail, an existing research platform with AI features, rather than building custom tools. The fit was good enough and the team could start getting value quickly. The Brilliant Club used Miro, a tool they already had, when it added AI clustering features - no new procurement, no new budget line, just experimenting with capabilities that appeared in something they were already paying for. Street Support Network took a different route, assembling a meeting workflow from free and low-cost tools: Calendly for scheduling, Krisp for transcription, and LLM access for processing. None of the individual tools were custom, but the workflow they built by connecting them was.

Knowing when to buy, when to build, and when to wait is part of the leadership challenge. There's no formula, but some principles help: buy when the off-the-shelf is genuinely good enough and you need to move quickly, build when your needs are specific and the fit matters more than the convenience, assemble from existing tools when the components exist but nobody's put them together for your use case, and wait when you're uncertain and the cost of delay is low. The worst option is usually buying something expensive and then not using it, which is what happened to several charities that invested in Copilot licences before their internal data was ready to support it.

This is also changing how charities work with digital agencies. Many organisations have spent years in a cycle of writing requirements, commissioning vendors, managing backlogs, and waiting for sprint cycles to deliver. The overhead of managing that relationship often rivals the cost of the work itself, and product owners spend more time translating between the organisation and the delivery partner than thinking about what users actually need. AI is starting to shift this. Requirements become easier to articulate when you can generate wireframes or build a prototype that shows what you need rather than describing it in a brief. Things that used to require a support ticket and a two-week wait - a content update, a minor feature change, a data export - can increasingly be handled internally. RSPCA Coventry built a custom API feed for their website using AI-assisted development over about 40 hours, and found the result outperformed what human developers had delivered to the same brief. That doesn't mean agencies become irrelevant. Architecture decisions, security, scalability, and deep technical judgment still require specialist expertise. But the boundary shifts from 'we need you to build everything' toward 'we need you for the complex, high-stakes work.' That's a different commercial model and a different kind of partnership.

There's a subtler shift too. When development capacity was scarce and expensive, backlogs prioritised themselves. You could only afford to build the most important things, so the hard choices were partly made for you. As it becomes faster and cheaper to build, the question moves from 'can we afford this?' to 'should we build this?' That requires stronger product thinking, clearer prioritisation, and a willingness to say no to things that are possible but not valuable. The constraint shifts from capacity to judgment.

Where DIY ends

It's worth being honest about what AI-assisted development can and can't do. Tools like Claude Code and Cursor are genuinely powerful for prototyping, building simple internal tools, and automating straightforward workflows. If you need a form that saves to a spreadsheet, a dashboard, or a script that cleans up your data, someone on your team can probably build it.

But there's a gap between a working prototype and a reliable system. Connecting multiple data sources, handling edge cases, building something that works at scale, maintaining it when the person who built it moves on - these are different problems. The Breast Cancer Now transcription system processes 20+ NHS trust form variants at 99% accuracy, combining multiple AI approaches for different parts of the pipeline. That's not something you can vibe code in an afternoon. Goose went through months of iterative development with heritage organisations - a small core in deep co-design, extended to 40+ in beta testing - before the right approach even became clear. The front end might be buildable with AI assistance. The architecture, the data design, the understanding of what to build in the first place - that still requires experience.

The risk isn't that people try to build things themselves. That's good, and more of it should happen. The risk is that organisations assume everything is now DIY, hit a wall, and conclude AI doesn't work for them. Know which category your problem falls into before you start.

16. Innovation culture

What does innovation look like in a charity? You can't move fast and break things when trust is your currency and the consequences of failure could be felt by your beneficiaries and supporters.

But there's also a cost to not trying. Things may not settle for years, and the organisations learning the most are the ones experimenting now, even in small ways.

So what does innovation culture in a charity look like? It probably means permission to experiment within boundaries, safety to fail on small things, time carved out for learning, and clear lines around what's off limits. It means celebrating what you learned from things that didn't work, not just celebrating successes. It means leaders who are curious rather than certain, who ask questions rather than just give answers. Virgin Money Foundation took this approach: their AI education was framed around helping people evaluate whether AI aligned with their values, not around persuading them to adopt it. The team moved from experiencing AI as external pressure to seeing it as something they could thoughtfully assess. That distinction - exploration rather than obligation - matters.

It also means being honest about constraints. Most charities don't have spare capacity sitting around waiting to be deployed on innovation. Experimentation has to happen alongside the day job, which means it needs to be protected time, not just good intentions. If it's not in the plan, it won't happen.

The culture question is real but it's not everything. You can have the most innovative culture in the sector, but if nobody has time to experiment, nothing will change. Culture enables innovation but resources make it possible.

17. Strategic leadership

AI doesn't belong to one person. It cuts across the organisation: HR is navigating skills gaps and changing roles, finance is making investment decisions, operations is seeing workflows shift. This needs to be a senior leadership team conversation, not something delegated to whoever seems most technical.

That's because AI implementation is about change management, not technology. It requires people who can make resource decisions, bring different parts of the organisation together, and maintain focus when priorities compete.

The pace of change makes this harder. The person leading on AI needs to be watching the horizon: not chasing every new tool, but understanding when something significant shifts. The jump from "AI that suggests text" to "AI that builds applications" happened in months. Someone in your organisation needs to notice when these shifts create new opportunities or make previous decisions obsolete.

What leadership support enables

The most useful thing leadership can do is create space for experimentation, then pay attention to what comes back. An AI telephone assistant called Dora is now used by multiple NHS trusts across the South East for cataract follow-up calls. At Frimley, it cut the time between surgery and follow-up contact from 10 weeks to two. In Hampshire and Isle of Wight, average waits for low-complexity cataract surgery fell from 35 weeks in January 2024 to 10 weeks or less, with Dora credited as one contributor alongside virtual clinics and one-stop cataract pathways (NHS England, October 2025). That progression from single-trust trial to regional adoption only happens when leadership treats initial experiments as learning exercises, not just efficiency measures. If your board wants a practical starting point of their own, the board paper summarisation and strategic challenge recipes can help trustees engage with AI directly.

There's also a question of ambition. Most AI adoption in charities is still tactical: making existing work a bit faster, automating small tasks, saving time on admin. That's a reasonable place to start, but it won't transform what your organisation can achieve. The strategic leadership question is bigger: what could you do differently if AI worked well? What would it mean to reach twice as many people without doubling the team? The organisations asking these questions at board level, not just approving tools, are more likely to see real impact.

Planning when the ground keeps shifting

The five-year strategy has always been the backbone of charity planning. But things are moving too fast for traditional planning cycles. By the time you've written the digital strategy, consulted stakeholders, and got board sign-off, the tools have changed. The capabilities available when you finish the plan aren't the capabilities you planned for.

One approach is to hold goals firmly but methods loosely. Be clear about what problems you're trying to solve, but stay flexible about how you solve them. Build in regular review points - quarterly rather than annual. The organisations doing this well tend to have a roadmap that's explicit about what they're testing now, what they're watching, and what they've decided to leave alone for now. That's planning that creates room for learning rather than locking in approaches that might be overtaken.

This requires a different relationship with uncertainty. Not everything will work. Some investments won't pay off. The point isn't to get it right the first time - it's to learn fast enough that the mistakes are small and the wins compound. Street Support Network's advice applies here too: "You don't have to try to implement everything at once." Start with one element, learn from it, then build on what works.

The risk is that AI becomes everyone's responsibility and therefore nobody's. Or that it sits with IT when the real opportunities are in service delivery. Where AI sits matters - it's not purely a technology question.

Some charities are addressing this directly. The British Heart Foundation established an AI working group in 2023, alongside a wider community of AI users across the organisation and a formal AI strategy. They appointed a Chief Technology Officer and created a Technology Directorate, making it clear that AI sits at organisational level, not within a single team. More recently they ran structured workshops across every directorate to identify and prioritise AI use cases, ensuring that opportunities were grounded in each team's actual pain points rather than imposed from the centre. The Charity Commission highlighted BHF's approach as an example of what good looks like.

Not every charity needs a working group or a CTO. But someone needs to own the question of how AI is being used, where the risks are, and whether the organisation is learning from what it's trying. The 2025 Charity Governance Code now explicitly includes AI oversight within its Managing Resources and Risks principle. At minimum, that means boards should be asking: who is responsible for this, and are they resourced to do it properly?

There's also a question about leadership behaviour. If senior leaders aren't using AI themselves, aren't curious about it, aren't visibly learning, that sends a message. The charities where AI is gaining traction tend to have leaders who are experimenting alongside their teams, asking questions, and being honest about what they don't yet understand.

18. The investment question

At an individual level, the case is straightforward. A subscription costs around £20 per month. If the tool saves 30 minutes a week, it's paid for itself.
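As a rough check on that claim, the break-even point can be worked out directly. This is a minimal sketch, assuming the £20 subscription and 30 minutes a week quoted above. The function name and the 52-week year are our own illustrative assumptions, not figures from any of the case studies.

```python
WEEKS_PER_YEAR = 52
MONTHS_PER_YEAR = 12


def break_even_hourly_cost(subscription_per_month: float,
                           minutes_saved_per_week: float) -> float:
    """Hourly staff cost at which the time saved equals the subscription fee."""
    # Convert weekly minutes saved into average hours saved per month.
    hours_saved_per_month = (
        (minutes_saved_per_week / 60) * WEEKS_PER_YEAR / MONTHS_PER_YEAR
    )
    return subscription_per_month / hours_saved_per_month


# Figures from the text: £20 per month, 30 minutes saved per week.
threshold = break_even_hourly_cost(20, 30)
print(f"Break-even staff cost: £{threshold:.2f} per hour")  # £9.23 per hour
```

On these assumptions the subscription pays for itself whenever an hour of staff time is worth more than about £9.23. Swap in your own figures to test a different tool or a more cautious estimate of time saved.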

Organisational return is harder to pin down. When AI is embedded into workflows or services, the gains are real but tangled up with other changes. Breast Cancer Now's AI transcription service can scale cost-effectively while also increasing what it can deliver. That's not a simple productivity calculation, but it is a real one.

What investment looks like in practice

It helps to think in tiers.

At the low end, many AI features are now built into tools charities already use, or available on free tiers. The Brilliant Club experimented with Miro's AI features using the 10 free credits per user per month. The financial cost was zero, but they still needed time for prompt refinement and learning what worked. Even free tools have adoption costs.

At the team level, dedicated tools start to show clearer returns. Blood Cancer UK's Dovetail subscription costs around $45 per month per user. With two users in the UX team and free access for the rest of the organisation to view reports, the annual cost is roughly £1,000. The return: faster insights, better communication with senior leaders, and a UX function with more influence across the organisation.

At the workflow level, the investment shifts from subscriptions to design and integration time. Street Support Network assembled a meeting workflow from Calendly, Krisp, and LLM access. The individual tool costs are low or free, but making them work together required thought and iteration. The return: around two hours saved per meeting, plus what they described as "the elimination of constant scheduling stress." At 100 meetings a year, that's 200 hours of capacity.

The hidden costs

The subscription fee is rarely the real cost. Staff time to learn, process redesign, change management, building organisational confidence, ongoing quality review: these add up. The Brilliant Club found that even with free tools, "prompt refinement investment" was significant. Street Support Network's advice: "Don't try to implement everything at once. Start with just one element of a workflow."

When real-world infrastructure meets AI ambition

Breast Cancer Now's experience surfaced a different kind of hidden cost. The AI transcription system can handle complex survey forms accurately and quickly. But the existing scanners can only process a handful of forms at a time, creating a new bottleneck upstream. A bit of a reality check.

This is why proof of concept work matters. You find out what actually gets in the way before you've committed significant budget. The AI worked as promised, but getting the full benefit means the rest of the process needs to adapt. For any charity exploring AI, it's worth looking at the whole pipeline, not just whether the AI can do the job. Sometimes the real cost includes upgrading a scanner.

Three levels of investment

Individual productivity is relatively cheap: subscriptions, some protected time for learning. Quick wins, but limited organisational impact.

Capability building is different. Investing in people's skills, confidence and judgment takes time and often external support, but builds an organisation that can identify and act on opportunities as they emerge. We'd like to see funders supporting this more actively.

Investing in specific workflows and services can mean higher upfront costs, but the return becomes clearer: this service now costs less to run, or reaches more people.

Why AI doesn't fit traditional models

AI doesn't fit traditional digital investment models. Subscriptions are ongoing. The hidden cost in staff time (experimentation, learning, managing change) is significant. It's less like buying a CRM and more like ongoing capability investment.

And there's the cost of not investing. As the gap widens between organisations building AI capability and those that aren't, the cost of catching up later only increases.

19. Governance

You need enough governance to enable safe experimentation, not so much that it paralyses everything. At minimum, you need clarity on: what tools are approved, what data can and can't go into AI systems, where human review is required, who can approve new uses, and how to raise concerns. Clear enough that people can make sensible decisions without asking permission for everything.
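One way to make that minimum concrete is to write the five points down as a simple structured policy that staff, or a script, can check a proposed use against. The sketch below is illustrative only: the class, field names, tool name, data categories and contact address are all hypothetical, and a real policy would be set by your own leadership and data protection lead.

```python
from dataclasses import dataclass


@dataclass
class AIUsePolicy:
    """Hypothetical minimum AI policy covering the five points above."""
    approved_tools: set[str]      # what tools are approved
    prohibited_data: set[str]     # what data can't go into AI systems
    human_review_uses: set[str]   # where human review is required
    approvers: list[str]          # who can approve new uses
    concerns_contact: str         # how to raise concerns

    def check(self, tool: str, data_types: set[str], use: str) -> list[str]:
        """Return notes for a proposed use; an empty list means go ahead."""
        notes = []
        if tool not in self.approved_tools:
            notes.append(
                f"Tool '{tool}' is not approved; ask {' or '.join(self.approvers)}."
            )
        blocked = sorted(set(data_types) & self.prohibited_data)
        if blocked:
            notes.append(f"Do not enter this data: {', '.join(blocked)}.")
        if use in self.human_review_uses:
            notes.append(f"Human review is required for '{use}'.")
        return notes


# All values below are made-up examples, not recommendations.
policy = AIUsePolicy(
    approved_tools={"example-chat-assistant"},
    prohibited_data={"beneficiary case notes", "donor bank details"},
    human_review_uses={"external communications"},
    approvers=["Head of Operations"],
    concerns_contact="ai-concerns@example.org",
)

for note in policy.check(
    "example-chat-assistant", {"donor bank details"}, "external communications"
):
    print(note)
print(f"Questions or concerns: {policy.concerns_contact}")
```

The point of a structure like this is that most everyday uses return no notes at all, so people can get on with the work without asking permission each time.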